Welcome back to the architecture deep dive! Autumn 2023 marks a turning point in the way users interact with SAP systems. SAP has officially introduced Joule – the generative AI copilot that will be deeply integrated into the SAP ecosystem (S/4HANA, SuccessFactors, Ariba).

But beyond the impressive marketing demos, a critical question immediately arises for us Enterprise Architects and CISOs: How does it work under the hood? Are our highly sensitive financial and HR data simply streamed to OpenAI via API? In this post, we dissect the architecture of Joule and analyze how SAP manages to deliver contextualized AI without violating the principles of Zero Trust and data sovereignty.

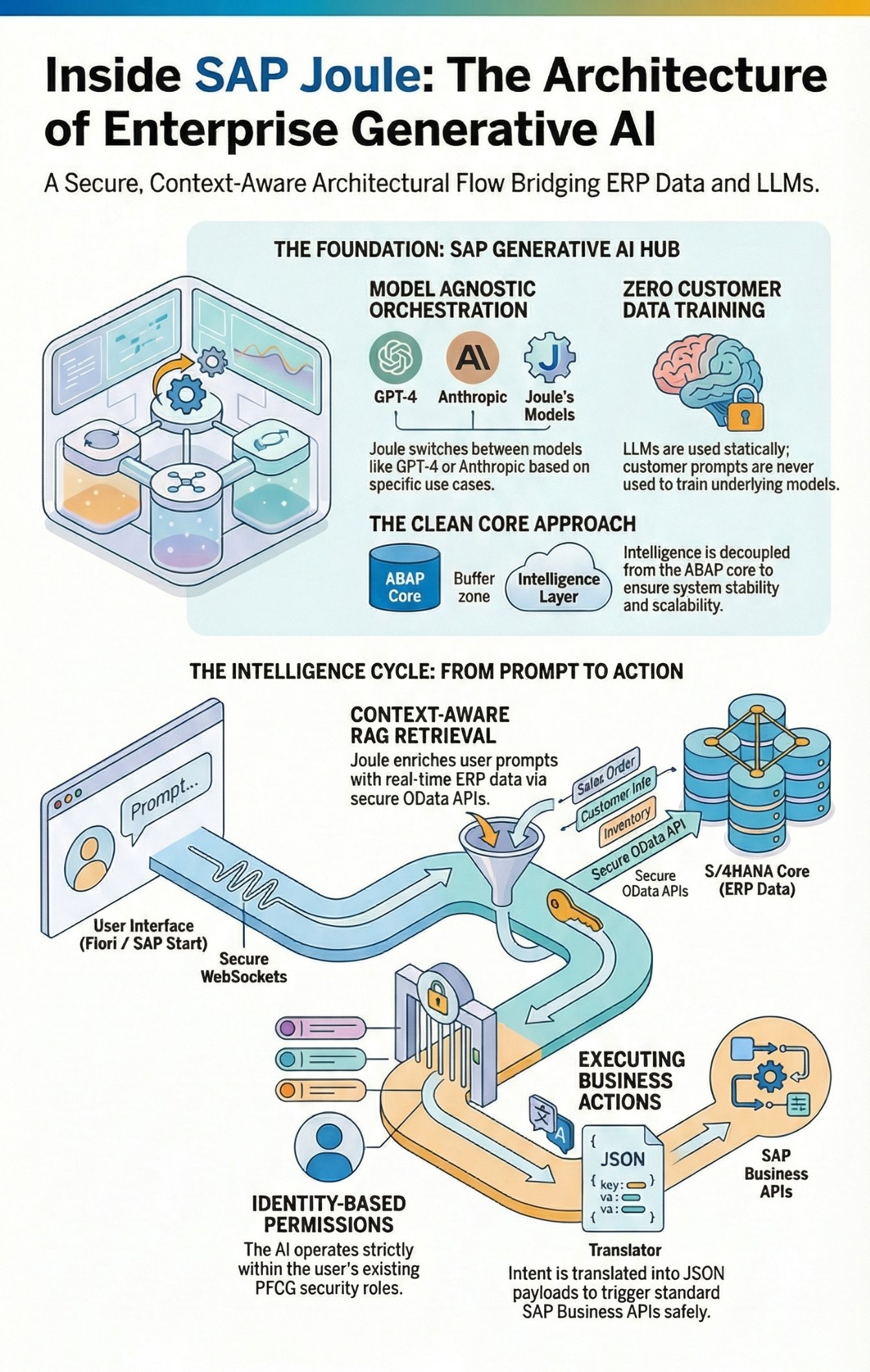

The Architectural Core: SAP Generative AI Hub on the BTP

Joule is not a monolithic program hardcoded into the ABAP core of S/4HANA. The intelligence sits decoupled on the SAP Business Technology Platform (BTP). The technical centerpiece that makes Joule possible is the SAP Generative AI Hub.

The AI Hub acts as an architectural abstraction layer (middleware) between the SAP business logic and the Large Language Models (LLMs) of the hyperscalers.

- Model Agnosticism: SAP does not bind itself exclusively to one provider. Via the AI Hub, Joule orchestrates requests to Microsoft Azure OpenAI (GPT-4/GPT-3.5), Aleph Alpha, or Anthropic depending on the use case.

- No Model Training with Customer Data: This is the most crucial architectural decision for enterprise security. The foundation models are used statically. Prompts sent to Azure OpenAI via the AI Hub are strictly not used to train the underlying models (contractually guaranteed via hyperscaler agreements).
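To make the abstraction-layer idea tangible, here is a minimal sketch of use-case-based routing across interchangeable providers. It is not SAP's implementation: `GenerativeHub`, the route names, and the two `fake_*` provider functions are hypothetical stand-ins, and no real LLM is called.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A provider is modelled as a plain function from prompt to completion.
Provider = Callable[[str], str]


@dataclass
class GenerativeHub:
    """Hypothetical abstraction layer between business logic and LLM providers."""
    providers: Dict[str, Provider]
    routes: Dict[str, str]          # use case -> provider name
    default_provider: str

    def complete(self, use_case: str, prompt: str) -> str:
        # The caller names a use case, never a concrete provider.
        name = self.routes.get(use_case, self.default_provider)
        return self.providers[name](prompt)


# Stub providers (assumptions for illustration only).
def fake_azure_openai(prompt: str) -> str:
    return f"[azure-openai] {prompt}"


def fake_aleph_alpha(prompt: str) -> str:
    return f"[aleph-alpha] {prompt}"


hub = GenerativeHub(
    providers={"azure-openai": fake_azure_openai, "aleph-alpha": fake_aleph_alpha},
    routes={"summarize": "azure-openai", "classify": "aleph-alpha"},
    default_provider="azure-openai",
)

print(hub.complete("classify", "Is this invoice overdue?"))
```

Because callers only ever pass a use case, changing an entry in `routes` swaps the provider without touching the calling code, which is the property the model-agnostic design is after.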

How Joule Understands SAP Context: RAG and Semantic Layer

A generic LLM knows nothing about the contents of a customer's tables, whether the standard company code table T001 or custom Z tables, or about an employee's leave entitlement in SuccessFactors. To make Joule intelligent, SAP uses a Retrieval-Augmented Generation (RAG) architecture coupled with SAP's semantic data model.

- User Prompt: The user asks in the Fiori Launchpad: "Why did the margin for Product X drop in Q3?"

- Context Retrieval: Joule forwards the request to the backend (e.g., S/4HANA) via OData/REST APIs. In doing so, Joule always acts within the context of the logged-in user (Identity Propagation via SAP Cloud Identity Services). It therefore holds exactly the same authorizations (PFCG roles) as the human user.

- Data Grounding: The backend returns the hard operational data (numbers, table results) to the AI Hub.

- Prompt Enrichment: The AI Hub dynamically assembles a meta-prompt containing the user's question and the retrieved ERP data.

- LLM Generation: Only this enriched but anonymized (PII-filtered) prompt is sent to the LLM, which generates a natural, human-readable response from it.
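The five steps above can be sketched end to end in a few lines. This is a toy model under stated assumptions, not Joule's actual pipeline: the in-memory `BACKEND`, the authorization names, the e-mail-only PII filter, and `fake_llm` are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class User:
    user_id: str
    authorizations: Set[str]   # stand-in for the user's backend roles


# Toy backend: each record names the authorization required to read it.
BACKEND: List[Dict[str, str]] = [
    {"auth": "FIN_MARGIN_READ", "text": "Product X margin Q2: 31%, Q3: 24%"},
    {"auth": "FIN_MARGIN_READ",
     "text": "Product X material cost rose 12% in Q3 (approved by anna.meyer@example.com)"},
    {"auth": "HR_SALARY_READ", "text": "Product X team salary bands: confidential"},
]

# Deliberately simplistic PII filter: masks e-mail addresses only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def retrieve(user: User, keyword: str) -> List[str]:
    """Context retrieval: return only records the user is authorized to read."""
    return [
        r["text"] for r in BACKEND
        if r["auth"] in user.authorizations and keyword.lower() in r["text"].lower()
    ]


def redact_pii(text: str) -> str:
    return EMAIL.sub("[REDACTED]", text)


def build_prompt(question: str, facts: List[str]) -> str:
    """Prompt enrichment: combine the question with the redacted backend data."""
    context = "\n".join(f"- {redact_pii(fact)}" for fact in facts)
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {question}"


def fake_llm(prompt: str) -> str:
    # Placeholder for the model call; a real system would send `prompt` to an LLM.
    return f"(stub answer generated from a prompt of {len(prompt)} characters)"


controller = User("C001", {"FIN_MARGIN_READ"})
question = "Why did the margin for Product X drop in Q3?"
prompt = build_prompt(question, retrieve(controller, "Product X"))
print(prompt)
print(fake_llm(prompt))
```

Note where the security boundary sits in this sketch: the authorization check happens during retrieval, so data the user may not see never reaches the prompt in the first place.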

From Transactions to Actions: Joule as an Executing Agent

Joule is not just a glorified, read-only search engine. Its true strength lies in the architectural integration of Action Frameworks.

When Joule suggests: "Should I create a Purchase Requisition (PR) for the missing material?", it calls the standardized SAP Business APIs (OData V4). Joule translates the user's intent into a clean JSON payload and fires an HTTP POST request against the S/4HANA core. Here, too, the strict SAP standard checks apply (business logic validations, lock mechanisms, authorizations). A flawed AI command is rejected rather than posted, because the application layer validates the request just like regular user input.
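As a rough illustration of that flow, the sketch below turns an intent into a JSON body and runs it through a simulated application layer. Everything here is an assumption made for the example: the field names, the `/PurchaseRequisition` path, the `PR_CREATE` authorization, and `backend_post` itself, which stands in for the real OData service and sends nothing over the network.

```python
import json
from dataclasses import dataclass
from typing import Set, Tuple


@dataclass
class Intent:
    material: str
    quantity: float
    plant: str


def intent_to_payload(intent: Intent) -> str:
    """Serialize the intent into a JSON request body (field names are hypothetical)."""
    return json.dumps({
        "Material": intent.material,
        "RequestedQuantity": intent.quantity,
        "Plant": intent.plant,
    })


KNOWN_MATERIALS = {"MAT-100"}


def backend_post(path: str, body: str, user_auths: Set[str]) -> Tuple[int, str]:
    """Simulated application layer: the same checks apply to AI and human input."""
    if "PR_CREATE" not in user_auths:
        return 403, "missing authorization"
    data = json.loads(body)
    if data.get("Material") not in KNOWN_MATERIALS:
        return 400, "unknown material"
    quantity = data.get("RequestedQuantity")
    if not isinstance(quantity, (int, float)) or quantity <= 0:
        return 400, "quantity must be positive"
    return 201, "created"


path = "/PurchaseRequisition"   # hypothetical entity set
buyer = {"PR_CREATE"}

print(backend_post(path, intent_to_payload(Intent("MAT-100", 5, "1000")), buyer))
print(backend_post(path, intent_to_payload(Intent("MAT-100", -5, "1000")), buyer))
print(backend_post(path, intent_to_payload(Intent("MAT-100", 5, "1000")), set()))
```

The design point is that the validation lives in the backend, not in the copilot: a hallucinated material number or a nonsensical quantity fails the same way a typo in the UI would.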

UI Integration: SAP Start and Fiori

Technologically, Joule is seamlessly integrated into SAP Start (the new central entry point for cloud solutions) and the classic Fiori Launchpad. The UI integration is realized via Web Components that communicate with the backend on the BTP via secure WebSockets. This makes Joule context-aware: based on the active Fiori app (e.g., "Manage Sales Orders"), the copilot knows which data record the user is currently viewing.
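One way to picture this context awareness is a chat message that carries the UI context alongside the user's text. The envelope below is purely illustrative: the message shape, the field names, and the `resolve_reference` helper are assumptions for this sketch, not Joule's actual wire format.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class UiContext:
    app_id: str                        # e.g. the active Fiori app
    object_type: Optional[str] = None  # e.g. "SalesOrder"
    object_key: Optional[str] = None   # key of the record currently open


def build_message(text: str, ctx: UiContext) -> str:
    """Client side: wrap the user's text and the UI context in one JSON frame."""
    return json.dumps({"type": "user_message", "text": text, "context": asdict(ctx)})


def resolve_reference(raw: str) -> str:
    """Server side: use the context to make a vague request concrete."""
    message = json.loads(raw)
    ctx = message["context"]
    if ctx["object_key"]:
        return f"{message['text']} (about {ctx['object_type']} {ctx['object_key']})"
    return message["text"]


frame = build_message(
    "Why is this delayed?",
    UiContext(app_id="Manage Sales Orders", object_type="SalesOrder", object_key="4711"),
)
print(resolve_reference(frame))
```

With the context attached, "this" in the user's question can be resolved to a concrete record without asking the user to repeat the order number.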

Conclusion for Enterprise Architects

With the introduction of Joule in October 2023, SAP ushers in the end of purely transactional user interfaces (/nVA01). The architectural decision not to hardcode AI into the on-premise cores, but to orchestrate it centrally via the Generative AI Hub on the BTP, is a masterpiece. It guarantees scalability, forces customers onto the "Clean Core" path, and allows SAP to swap LLM providers in the background at any time without requiring adjustments to the customer architecture.

For us architects and Basis admins, this means: SAP Cloud Identity Services (IAS/IPS), OData API Management, and the security of BTP Subaccounts are becoming mandatory disciplines. Anyone still operating their ERP system in isolated on-premise silos today is cutting themselves off from the biggest leap in productivity of the current decade.