Welcome back to the architectural control center! As a Senior SAP Technology Consultant and long-time observer of SAP infrastructure evolution, I am devoting today's post to the most strategically important decision IT leaders currently face: which hyperscaler is the best foundation for the "Intelligent Enterprise"? The financial data speaks volumes. In the first quarter of 2023, SAP recorded a 24% increase in cloud revenue, while free cash flow nearly doubled. The on-premise era, dominated by monolithic data centers and static 5-year hardware sizings, is drawing to a close.

In this post we analyze the pros and cons of the major hyperscalers (AWS, Azure, GCP) in detail and connect classic SAP Basis knowledge with modern cloud-native architectures, storage technologies, and configuration examples.

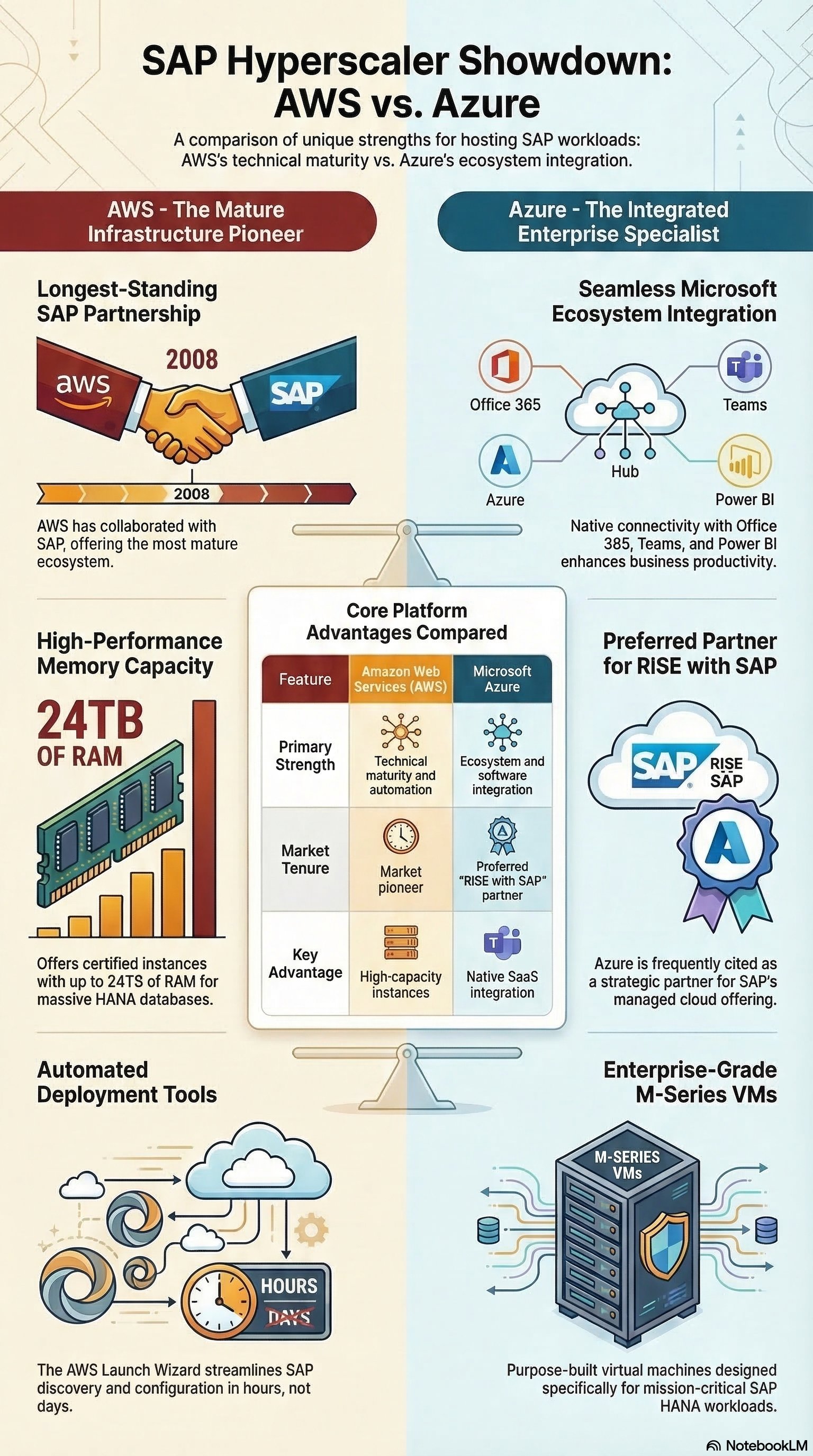

AWS: The Pioneer of SAP Cloud Architecture

Amazon Web Services (AWS) was the first provider to certify SAP production environments back in 2008. This historical maturity is reflected in an extremely robust and granular IaaS (Infrastructure as a Service) architecture.

Advantages of SAP on AWS:

- AWS Nitro System: A major architectural differentiator. The Nitro architecture offloads hypervisor functions (network I/O, storage routing) onto dedicated hardware cards. The advantage: Amazon EC2 instances can dedicate nearly 100% of their physical CPU and RAM resources to the SAP application, so hypervisor overhead is practically negligible.

- Scalability (Compute): For memory-intensive SAP HANA in-memory databases, AWS offers specialized EC2 High Memory instances (u- instances based on Intel Xeon Scalable processors). These deliver near bare-metal performance for scale-up databases from 3 TB up to 32 TB RAM in a single instance. For SAP BW/4HANA (OLAP), scale-out clusters with up to 100 TB RAM can be provisioned.

- Storage Performance: High-performance block storage via Amazon EBS (Elastic Block Store) with io2 Block Express or gp3 volumes helps meet the SAP storage KPIs (latencies under 1 ms for HANA log writes on /hana/log) and prevents sync delays.
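The scale-up versus scale-out reasoning above can be sketched as a small planning helper. This is only an illustration: the 32 TB and 100 TB limits are the figures quoted in this post, while the function name `plan_hana_layout` and the default node size of 6 TB are assumptions made for the example, not AWS or SAP sizing rules.

```python
import math

# Limits quoted in this post; treat them as planning assumptions, not quotas.
MAX_SCALE_UP_TB = 32     # largest single High Memory instance mentioned
MAX_SCALE_OUT_TB = 100   # largest BW/4HANA scale-out cluster mentioned


def plan_hana_layout(required_tb, node_tb=6.0, olap=False):
    """Rough layout suggestion (hypothetical helper, not an official sizing tool)."""
    if required_tb <= 0 or node_tb <= 0:
        raise ValueError("memory sizes must be positive")
    if required_tb <= MAX_SCALE_UP_TB:
        # Fits into a single instance: prefer scale-up.
        return {"mode": "scale-up", "nodes": 1}
    if olap and required_tb <= MAX_SCALE_OUT_TB:
        # Only the OLAP (BW/4HANA) case is described as scale-out here.
        return {"mode": "scale-out", "nodes": math.ceil(required_tb / node_tb)}
    return {"mode": "unsupported", "nodes": 0}


print(plan_hana_layout(8))
print(plan_hana_layout(48, node_tb=6.0, olap=True))
```

A real sizing would of course start from the SAP Quick Sizer or a HANA sizing report; the helper only makes the decision boundary explicit.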

Disadvantages of SAP on AWS:

- Lower Native Business Ecosystem Integration: Unlike Microsoft, AWS lacks the seamless, out-of-the-box integration with ubiquitous corporate tools (like Office 365, Teams, or a dominant Active Directory), which can require additional middleware effort for end-to-end process integrations.

Azure: The Power of Integration and Enterprise Storage

Microsoft Azure positions itself as a strong competitor through the "Project Embrace" partnership with SAP and through its own technical innovations.

Advantages of SAP on Azure:

- Azure NetApp Files (ANF): Arguably Azure's most significant technical differentiator in storage. While AWS often relies on block storage, ANF is an enterprise-grade file storage service based on bare-metal NetApp ONTAP systems. ANF provides shared storage via NFSv4.1. The advantage for veteran Basis admins: complex and error-prone Linux Pacemaker configurations with iSCSI LUNs or DRBD for SAP ASCS/ERS (ABAP SAP Central Services / Enqueue Replication Server) clusters are drastically simplified.

- M-Series Virtual Machines & Write Accelerator: For highly scalable S/4HANA workloads, Azure relies on the M-Series (Mv2 and Mv3), which also provide up to 32 TB RAM. A major advantage at the architecture level is the Write Accelerator, applied to Premium Storage to achieve sub-millisecond write latencies for the critical /hana/log volume.

- Native Ecosystem: Native integration with Microsoft Entra ID (formerly Azure AD), Power BI, and M365 significantly reduces the effort for Single Sign-On (SSO) and data analytics.
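Whether a given storage layout actually reaches the sub-millisecond target for /hana/log can be verified from measured write latencies. The following is a minimal sketch, assuming the samples have already been collected in milliseconds by some benchmarking tool. The function names and the choice of the 99th percentile are illustrative assumptions, not an SAP-defined procedure.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile; pct is an integer between 1 and 100."""
    if not samples_ms:
        raise ValueError("no latency samples provided")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def log_latency_ok(samples_ms, threshold_ms=1.0, pct=99):
    """Return (observed percentile, True if it is below the threshold)."""
    observed = percentile(samples_ms, pct)
    return observed, observed < threshold_ms


# Hypothetical measurements: mostly fast writes with a single outlier.
healthy = [0.3] * 98 + [0.9, 2.5]
print(log_latency_ok(healthy))
```

Checking a high percentile rather than the average is a deliberate choice here: a handful of slow log writes can stall commits even when the mean looks fine.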

Disadvantages of SAP on Azure:

- Complexity in Storage Design: Choosing between Premium SSD v2, Ultra Disk, and Azure NetApp Files requires extremely precise architectural sizing. Misconfiguring the storage tiers here quickly leads to significant performance bottlenecks or unexpected cost spikes.

GCP: The Innovator in the Background

Google Cloud Platform (GCP) is often cited as the third player but stands out with one distinctive feature.

Advantage of SAP on GCP:

- Live Migration Technology: GCP features a patented live migration at the compute level. Running, productive SAP HANA VMs are moved to other hosts during physical hardware maintenance in the data center without a reboot, so SAP Basis administrators do not have to schedule downtime for it.

Evolution of Data Backup: Old Backup Knowledge vs. Cloud-Native Backint

We all still know the classic Basis setup: backups were written locally to file systems via tools like BR*Tools and offloaded to physical tape libraries via Tivoli Storage Manager (TSM) or Commvault. The resulting Recovery Time Objectives (RTO) were extremely long.

Today, this outdated design is replaced by the SAP HANA Backint API, which pumps database streams directly into cloud object storage without file system detours. This is where the specific pros and cons of the platforms emerge:

- AWS: Uses the AWS Backint Agent for SAP HANA. Advantage: Backups stream directly into Amazon S3, saving expensive EBS storage space. Disadvantage: The agent must be installed and configured manually on the EC2 instance.

The required database-level configuration in global.ini looks like this:

```ini
[backup]
log_backup_using_backint = true
catalog_backup_using_backint = true
data_backup_parameter_file = /usr/sap/<SID>/SYS/global/hdb/opt/hdbconfig/aws-backint-agent-config.yaml
log_backup_parameter_file = /usr/sap/<SID>/SYS/global/hdb/opt/hdbconfig/aws-backint-agent-config.yaml
catalog_backup_parameter_file = /usr/sap/<SID>/SYS/global/hdb/opt/hdbconfig/aws-backint-agent-config.yaml
```

And the corresponding YAML mapping to the cloud storage (S3):

```yaml
# AWS Backint Agent configuration (aws-backint-agent-config.yaml)
S3BucketName: "my-sap-hana-backup-bucket"   # bucket name, not an ARN
S3BucketAwsRegion: "eu-central-1"
S3BucketOwnerAccountID: "123456789012"
S3StorageClass: "STANDARD"
S3SseEnabled: "true"
S3SseKmsArn: "arn:aws:kms:eu-central-1:123456789012:key/<key-id>"
LogFile: "/hana/shared/aws-backint-agent/aws-backint-agent.log"
```

- Azure: Offers a major operational advantage. Azure Backup for SAP HANA is a fully integrated "Backup as a Service" within the Azure Recovery Services vault. Disadvantage: It is tied to Azure-specific vault restrictions. The administrative advantage is considerable, however: point-in-time recoveries (PITR) can be controlled down to the second directly in the Azure Portal, without operating your own backup infrastructure servers, and log backups every 15 minutes support a 15-minute RPO (Recovery Point Objective).

- GCP: Offers the Cloud Storage Backint agent, which stands out with highly parallel transfers and encryption in Cloud Storage buckets.
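Because a typo in the `[backup]` section of global.ini (as shown in the AWS item above) silently sends backups back to the file system, it can be worth linting the file before handing it to HANA. The sketch below uses Python's standard `configparser` and is only an illustration: the function name `check_backint_settings` is hypothetical, and the check covers just the four parameters listed in this post.

```python
import configparser

# Parameters taken from the global.ini fragment in this post.
MUST_BE_TRUE = ("log_backup_using_backint", "catalog_backup_using_backint")
MUST_BE_SET = ("data_backup_parameter_file", "log_backup_parameter_file")


def check_backint_settings(ini_text):
    """Return a list of problems found in the [backup] section (empty if none)."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    if not parser.has_section("backup"):
        return ["missing [backup] section"]
    section = parser["backup"]
    problems = []
    for key in MUST_BE_TRUE:
        if section.get(key, fallback="").strip().lower() != "true":
            problems.append(f"{key} is not set to true")
    for key in MUST_BE_SET:
        if not section.get(key, fallback="").strip():
            problems.append(f"{key} is missing")
    return problems


sample = """[backup]
log_backup_using_backint = true
catalog_backup_using_backint = true
data_backup_parameter_file = /usr/sap/<SID>/SYS/global/hdb/opt/hdbconfig/aws-backint-agent-config.yaml
log_backup_parameter_file = /usr/sap/<SID>/SYS/global/hdb/opt/hdbconfig/aws-backint-agent-config.yaml
"""
print(check_backint_settings(sample))
```

The check is purely syntactic. It does not verify that the referenced YAML file exists or that the agent can reach the bucket.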

SAP BTP and Artificial Intelligence: Native Hyperscaler vs. SAP AI Core

The paradigm shift from the monolithic ABAP core (Z-namespace) to the Clean Core forces architects to move extensions side-by-side onto the SAP Business Technology Platform (BTP). The sharpest architectural trade-off currently lies in the AI strategy:

- SAP BTP AI Core: The advantage lies in the direct business data context. AI Core works with SAP semantics, the Cloud Application Programming Model (CAP), and ERP authorizations out of the box. Disadvantage: Often more expensive and more restrictive in model selection than direct hyperscaler services.

- Hyperscaler Native (e.g., Azure OpenAI): The advantage is direct access to state-of-the-art LLMs like GPT-4 and the compute capacity behind them, subject to the provider's quotas. A current best-practice design uses the HANA Vector Engine hosted in the BTP as a high-performance vector database (for embeddings of material masters and bills of materials), combined with a RAG (Retrieval-Augmented Generation) architecture on Azure OpenAI. This delivers contextualized answers grounded in ERP data, which reduces hallucinations, but requires a higher initial integration effort (disadvantage).
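The retrieval step of such a RAG design can be illustrated in a few lines. This is a toy sketch under stated assumptions: the three-dimensional vectors stand in for real embeddings, the in-memory list stands in for the HANA Vector Engine, and the finished prompt would be sent to an LLM endpoint such as Azure OpenAI, which is not called here. All function names are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equally long vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query_vec, documents, k=2):
    """Return the k documents whose embeddings are closest to the query."""
    ranked = sorted(documents,
                    key=lambda d: cosine(query_vec, d["embedding"]),
                    reverse=True)
    return ranked[:k]


def build_prompt(question, hits):
    """Ground the LLM by restricting it to the retrieved ERP context."""
    context = "\n".join(f"- {h['text']}" for h in hits)
    return f"Answer using only this ERP context:\n{context}\n\nQuestion: {question}"


# Toy material-master snippets with made-up embeddings.
docs = [
    {"text": "Material 1000: steel bolt M8", "embedding": [0.9, 0.1, 0.0]},
    {"text": "Material 2000: office chair", "embedding": [0.0, 1.0, 0.0]},
    {"text": "BOM 1000-A: bolt, nut, washer", "embedding": [0.7, 0.7, 0.0]},
]
hits = retrieve([1.0, 0.0, 0.0], docs, k=2)
print(build_prompt("Which parts belong to the bolt assembly?", hits))
```

In a production setup the similarity search would run inside the database and the embeddings would come from an embedding model. The point of the sketch is only that the LLM sees retrieved ERP records rather than answering from its own training data.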

Conclusion

The detailed analysis of the architecture data unequivocally shows: The "one perfect hyperscaler" for SAP workloads does not exist. The decision must be made granularly based on the existing Enterprise Architecture.

AWS is the strongest choice when it comes to compute performance with minimal hypervisor overhead (Nitro System) and long-proven stability for massive scale-up and scale-out databases. Azure stands out through its storage innovations (Azure NetApp Files for simplifying complex Pacemaker cluster setups) as well as its native integration into the Microsoft ecosystem and PaaS backup services. GCP shines as a specialist with features like live migration that minimize hardware-related downtime.

For modern SAP landscapes operating strictly according to the Clean Core principle, an intelligent multi-cloud approach is becoming the standard. The S/4HANA transaction system on Azure's M-Series or AWS's u-instances, historical data in S3 data lakes, and the orchestration of the latest RAG-based AI models via SAP BTP: that is the architecture that defines a future-proof "Intelligent Enterprise." The old Basis knowledge about storage latencies, Pacemaker clusters, and Backint interfaces remains the key to designing these highly complex cloud landscapes reliably and with high performance.